Disabling your integrated graphics card is pretty simple. However, in most cases, you do not have to disable the integrated graphics card yourself.

If you have a dedicated graphics card installed, the system automatically handles disabling/switching the graphics cards.

Conversely, if you DO NOT have a dedicated graphics card installed, disabling the integrated graphics card will result in far lower graphics performance.

In the following text, I will discuss how to disable integrated graphics, whether you should disable it, and how the system generally handles the switching if you have integrated and dedicated GPUs.

How to Disable Integrated Graphics Card?

There are two basic ways to disable integrated graphics cards on your PC.

- Through the Device Manager

- Through BIOS

Method 1: Disabling Integrated Graphics Card Through Device Manager

The first and the more intuitive method to disable integrated graphics cards is to use the Device Manager.

Here are the steps:

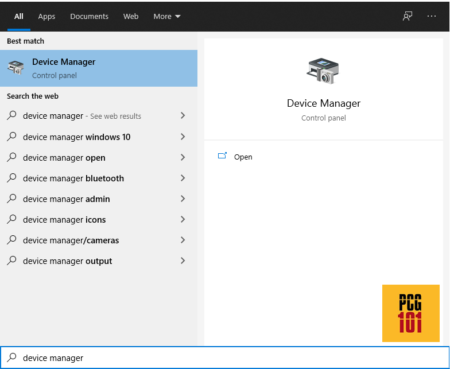

Step 1: Click Search on the Windows Taskbar.

Step 2: Search for “Device Manager.” You can also access the Device Manager through Control Panel -> Device Manager.

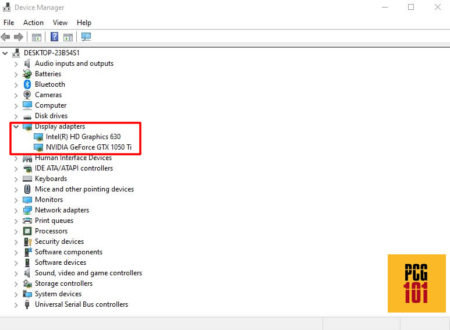

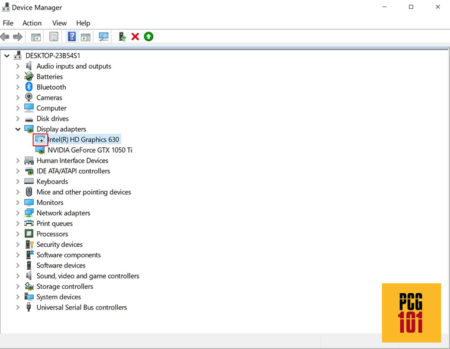

Step 3: Once the Device Manager window opens, expand the “Display Adapters” section and check which graphics cards it lists.

Since I have both an integrated and a dedicated GPU, the section displays two graphics cards.

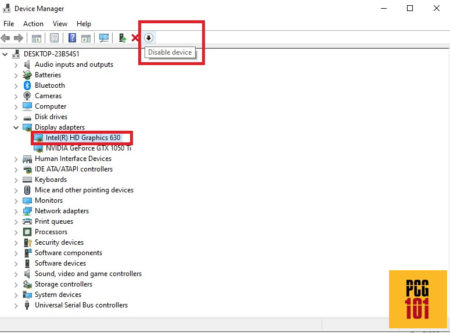

Step 4: Select the integrated graphics card and press the disable button. Ensure you have pressed the DISABLE button and NOT the Uninstall (X) button.

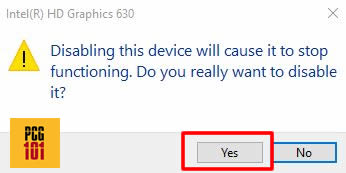

Step 5: A prompt will appear with a warning. Select “Yes.”

Subsequently, the screen may go blank for a few seconds.

When the display returns, you will notice a disabled icon next to the iGPU you disabled.
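
For readers who prefer a script, the Device Manager steps above can be approximated from Python by calling PowerShell's PnP cmdlets. This is a minimal sketch, not an official procedure: it assumes Windows with the `Get-PnpDevice` and `Disable-PnpDevice` cmdlets available and an administrator session, and the helper names (`list_adapters_command`, `disable_adapter_command`, `parse_adapters`, `list_adapters`) are made up for illustration.

```python
import json
import platform
import subprocess


def list_adapters_command():
    """Build the PowerShell command that lists display adapters as JSON."""
    script = (
        "Get-PnpDevice -Class Display | "
        "Select-Object FriendlyName, InstanceId, Status | ConvertTo-Json"
    )
    return ["powershell", "-NoProfile", "-Command", script]


def disable_adapter_command(instance_id):
    """Build the PowerShell command that disables one adapter (needs admin rights)."""
    # Inside a single-quoted PowerShell string, a quote is escaped by doubling it.
    safe_id = instance_id.replace("'", "''")
    script = f"Disable-PnpDevice -InstanceId '{safe_id}' -Confirm:$false"
    return ["powershell", "-NoProfile", "-Command", script]


def parse_adapters(json_text):
    """Turn the JSON printed by the list command into a list of dicts."""
    if not json_text.strip():
        return []
    data = json.loads(json_text)
    # ConvertTo-Json prints a single object, not a list, when only one adapter exists.
    return [data] if isinstance(data, dict) else data


def list_adapters():
    """Run the list command. Only meaningful on Windows."""
    if platform.system() != "Windows":
        raise RuntimeError("This sketch relies on Windows PowerShell cmdlets.")
    result = subprocess.run(
        list_adapters_command(), capture_output=True, text=True, check=True
    )
    return parse_adapters(result.stdout)
```

On Windows, `list_adapters()` returns each adapter with its instance ID, and passing one of those IDs to `disable_adapter_command` gives a command you can hand to `subprocess.run`. The sketch deliberately stops short of running the disable command itself, so that you confirm you picked the iGPU first.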

How to Tell Which One is Integrated and Which One is Dedicated GPU?

If you have both a dedicated and an integrated GPU installed and you are new to this, it may be hard to tell which of the two is the integrated one.

For instance, I have an Intel HD Graphics 630 and an NVIDIA GeForce GTX 1050 Ti on my system. But which one of the two is the iGPU?

If you have basic knowledge regarding the graphics card market, you would be quick to tell that the Intel HD Graphics 630 is the iGPU, whereas the NVIDIA GeForce GTX 1050Ti is the dedicated GPU.

Intel HD Graphics, Intel UHD Graphics, and Intel Iris graphics are all iGPUs (Intel's newer Arc line is the exception, since it also includes dedicated cards). NVIDIA GeForce GPUs, on the other hand, are dedicated graphics cards.

With AMD, things can be difficult for the uninitiated as AMD makes both dedicated and integrated GPUs.

If you are new and unsure about which GPU is integrated, it is better just to Google the make and model of the GPU as shown in the Device Manager.
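
The naming rules of thumb above can be sketched as a small Python helper. This is only a heuristic under stated assumptions: the marker lists and the function names (`normalize`, `classify_gpu`) are illustrative, the input is whatever name the Device Manager shows, and any name it does not recognize (including all AMD names) is reported as "unknown" so that you look it up instead of guessing.

```python
import re

# Illustrative marker lists based on the brand names discussed above.
INTEGRATED_MARKERS = ("intel hd graphics", "intel uhd graphics", "intel iris")
DEDICATED_MARKERS = ("nvidia", "geforce")


def normalize(name):
    """Lowercase the name and drop the (R)/(TM) marks Device Manager often shows."""
    cleaned = re.sub(r"\((r|tm)\)", " ", name.lower())
    return " ".join(cleaned.split())


def classify_gpu(name):
    """Return 'integrated', 'dedicated', or 'unknown' for an adapter name."""
    cleaned = normalize(name)
    if any(marker in cleaned for marker in INTEGRATED_MARKERS):
        return "integrated"
    if any(marker in cleaned for marker in DEDICATED_MARKERS):
        return "dedicated"
    # AMD makes both kinds, so its names are left for a manual lookup.
    return "unknown"


if __name__ == "__main__":
    for adapter in ("Intel(R) HD Graphics 630", "NVIDIA GeForce GTX 1050 Ti"):
        print(adapter, "->", classify_gpu(adapter))
```

Returning "unknown" rather than a guess is a deliberate choice here, because disabling the wrong adapter is worse than taking a minute to search for the model.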

Would Disabling the iGPU in Device Manager Automatically Switch Your System to a Dedicated GPU Permanently?

Often people believe that disabling the iGPU through the Device Manager will permanently switch their system to the dedicated GPU.

That is hardly the case.

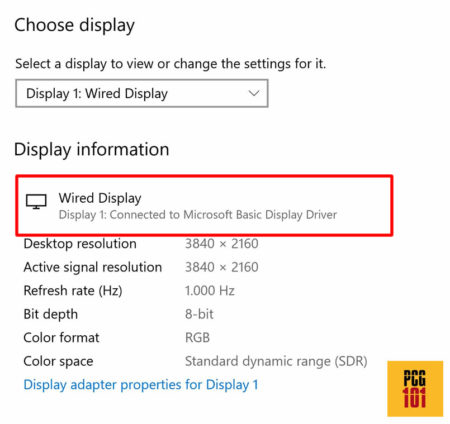

When you disable the iGPU (on laptops), your system switches to software-based video processing on the CPU via the Microsoft Basic Display Driver.

This results in far lower performance!

To permanently switch your PC from an iGPU to a dedicated GPU, you must do it through BIOS.

Method 2: Disabling Integrated Graphics Through BIOS

The other method of disabling integrated graphics cards is to use BIOS. This method is a bit more difficult for newbies.

For starters, note that the BIOS may differ significantly from one PC to another; therefore, the BIOS menu on my PC may not look like yours.

Additionally, some BIOS versions, particularly those on laptops, are so heavily stripped of basic settings that you may not find the menu for disabling the iGPU.

Step 1: Access BIOS

On PC startup, press the correct key for accessing BIOS. This key differs between PCs. Often it is “Delete,” “F2,” “F10,” or “F12.”

Step 2: Search for Settings Regarding Display

Once in BIOS, you must search for settings regarding the Integrated Graphics, Integrated Video, Integrated VGA, or general graphics settings.

The settings may be under the label Onboard Devices, Built-in Devices, or something similar.

You may have to go into the “Advanced” settings for this.

Step 3: Disable the iGPU / Select Discrete Graphics

Once you have located the correct settings, disable the iGPU, save the change, and exit BIOS.

In some cases, the settings may instead let you choose between “Auto,” “Discrete” (Dedicated), or “Integrated” graphics. Choose “Discrete.”

Note that tampering with the settings in BIOS can cause unwanted behavior and issues. Therefore, it is not recommended, especially if you are unsure whether you have located the correct settings for the iGPU.

Is It Safe to Disable Integrated Graphics?

It is not recommended to disable the integrated graphics.

This isn’t much of an issue on desktops, as the iGPU typically gets disabled automatically when a dedicated GPU is plugged in.

However, disabling the integrated graphics on laptops will result in software-based video processing using the Microsoft Basic Display Driver.

Since the Microsoft Basic Display Driver uses the CPU for video processing, you will see far lower performance.

However, if you have a working, dedicated GPU installed, disabling the iGPU on a laptop is generally safe if it is done through BIOS. It is often not necessary, though.

Do You Need to Disable Integrated Graphics Card if You Have Dedicated Graphics?

As mentioned earlier, disabling the iGPU on a laptop isn’t necessary if you have a dedicated graphics card, because the system automatically switches between the two GPUs.

Again, this isn’t a concern on desktops, as the BIOS typically disables the iGPU when a dedicated GPU is plugged in. There is no dynamic switching of GPUs on desktops.

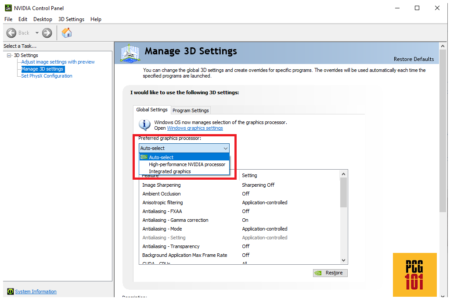

On laptops, for instance, in the NVIDIA Control Panel, you can select one of three “Preferred Graphics Processor” settings:

- Auto-Select – the default and recommended option

- High-Performance NVIDIA Processor – for using the dedicated GPU for all applications

- Integrated Graphics – for using the iGPU for all applications

Here you can see that with the “Auto-Select” option, the system automatically decides when to use the integrated GPU and the dedicated GPU depending upon your workload.

So, for instance, when doing less graphics-intensive work like browsing, watching YouTube videos, and writing reports, the system would use the integrated graphics card.

However, when gaming or using heavier editing and designing software, the system automatically switches to the dedicated graphics card.

This arrangement helps reduce power consumption. On laptops in particular, it also helps preserve battery life. Having the iGPU disabled and the dedicated GPU running constantly can tax the battery.

The Motherboard Video Ports Will Not Work with iGPU Disabled (Applicable to Desktops)

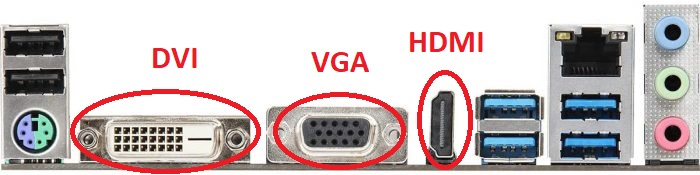

You may have guessed it already, but the video output ports on the back of your motherboard are connected to the iGPU.

If you disable the iGPU, the motherboard’s video output ports will NOT work.

It is also worth mentioning that when you have a dedicated GPU installed, the video output ports on the motherboard typically get disabled automatically on desktops.

There is a good reason for this. A monitor connected to the motherboard’s video out port receives graphics processing juice from the iGPU. Therefore, if you were to game on this monitor, it would seriously lag even if you have a powerful dedicated GPU installed separately.

To experience the power of the dedicated graphics card, you need to have the monitor connected to the video output ports of the dedicated graphics card.

You Can Also Have Both Integrated and Dedicated GPUs Enabled At The Same Time on Desktops

It is also possible to have both iGPU and the dedicated GPU working simultaneously on desktops.

This would allow you to use both the motherboard’s video ports and the graphics card’s video ports at the same time.

This method is excellent for multiple monitor display setups for office work. However, for gaming and intensive professional work, you will face the same issue as highlighted above, i.e., the monitors connected to the motherboard’s video ports will have far lower performance than those connected to the dedicated graphics card’s video ports.

In other words, if you are a gamer, you will get much higher frames per second on the monitor connected to the dedicated GPU than on the one connected to the motherboard’s video port.

To enable both dedicated and iGPU at the same time, I have written a comprehensive tutorial here:

How to Use Motherboard HDMI with Dedicated Graphics Card?

FREQUENTLY ASKED QUESTIONS

1. Does Disabling an Integrated Graphics Card Improve Performance?

Generally, no. On a desktop with a dedicated GPU, disabling the iGPU has no noticeable effect on performance. On a laptop, disabling it through the Device Manager can make the system fall back to the Microsoft Basic Display Driver, which lowers performance. It also does not make colors or visuals any sharper, and it will not improve battery life: the iGPU is the more power-efficient of the two GPUs, so keeping the dedicated GPU running constantly drains the battery faster.

2. What Happens if You Disable All Of Your Graphics Cards?

If you disable both the iGPU and the dedicated GPU on a laptop, your screen won’t go blank. Instead, software-based rendering done through the CPU will take over. This type of rendering is weak and can result in performance lags.

3. What are the potential risks or downsides to disabling an integrated graphics card?

Disabling an integrated graphics card may result in some potential risks or downsides.

One risk is that you may not be able to use your computer at all if the dedicated graphics card malfunctions or is not compatible with your system.

Another downside, on laptops, is shorter battery life, since the dedicated GPU has to run constantly instead of handing light work to the iGPU. Disabling the iGPU is a reversible setting, though, so it does not alter the hardware itself.

4. Can I disable an integrated graphics card without affecting the performance or stability of my computer?

Disabling an integrated graphics card may affect the performance or stability of your computer, especially if you’re running programs or games that require graphics-intensive processing. This can cause your system to slow down, freeze, or crash, and may also affect the quality of video playback or display output.

5. How do I disable an integrated graphics card in the BIOS or UEFI settings?

The process for disabling an integrated graphics card in the BIOS or UEFI settings can vary depending on your system manufacturer and model.

In general, you’ll need to enter the BIOS or UEFI settings by pressing a key or combination of keys during startup, then navigate to the “Integrated Peripherals” or “Chipset” settings menu.

From there, you should be able to locate the option to disable the integrated graphics card, which may be labeled as “Onboard VGA” or “Internal Graphics.”

Note that some systems may not allow you to disable the integrated graphics card entirely, and may instead offer the option to prioritize the dedicated graphics card over the integrated card.